[Overlay Networking] Part 2 - VTEPs and Software

September 4, 2013 in Systems · 4 minutes

In the previous post, we discussed the role of overlay networks and the virtual switches they connect to. In this post, we’re going to talk about a few additional components.

The Role of the Hardware VTEP

There’s been a lot of talk about VTEPs, and how virtually every networking vendor but Cisco is part of this elaborate ecosystem of vendors that contribute to the angelic glory that is NSX. Let’s put the politics aside and talk about what VTEPs (specifically hardware VTEPs) could do, even if they’re not doing it right now. Announced and shipping are two very different things. :)

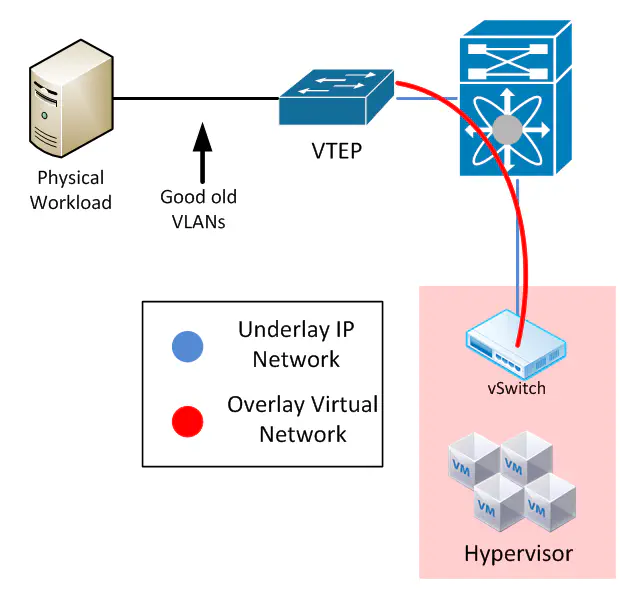

Two points here - first, the low-hanging fruit is the non-virtualized workloads. How do these integrate into this brave new world? Well, whether you’re talking VXLAN or whatever, you need some kind of device that speaks overlay. A device that can’t speak overlay, and can’t terminate that network so that other traffic types can be carried inside it, isn’t doing much good. VTEP stands for VXLAN Tunnel End Point (thus VXLAN is a requirement here, but more on that later). One thing we can do with a VTEP is provide a sort of translation boundary between the overlay network and the physical devices. So you’d set up one port to participate in a VLAN of your choosing, plug your physical server in there, and configure the VTEP to inject that VLAN’s traffic into a VXLAN of your choosing.

Ultimately this should be the job of the SDN controller, since the VTEP is little more than a distributed linecard at this point.
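To make the VLAN-to-VXLAN translation a little more concrete, here is a minimal Python sketch of what a VTEP does with a frame arriving from a physical port. The 8-byte header layout (a flags byte with the "VNI valid" bit, then a 24-bit VNI) and UDP port 4789 come from the VXLAN specification, RFC 7348. Everything else is an assumption for illustration: the `VLAN_TO_VNI` table, its values, and the function names are hypothetical, and the outer Ethernet/IP/UDP headers are left out entirely.

```python
import struct
from typing import Tuple

VXLAN_UDP_PORT = 4789        # IANA-assigned destination port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08  # the "I" flag: the VNI field is valid

# Hypothetical mapping a controller might push to the VTEP:
# access VLAN ID -> VXLAN Network Identifier (VNI).
VLAN_TO_VNI = {100: 5000, 200: 5001}


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a given 24-bit VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 0: flags (8 bits) + reserved (24 bits).
    # Word 1: VNI (24 bits) + reserved (8 bits).
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def encapsulate(vlan_id: int, inner_frame: bytes) -> bytes:
    """Wrap a frame from an access VLAN in the VXLAN header it maps to."""
    vni = VLAN_TO_VNI[vlan_id]  # KeyError means the VLAN is not mapped
    return vxlan_header(vni) + inner_frame


def decapsulate(payload: bytes) -> Tuple[int, bytes]:
    """Split a VXLAN payload back into (VNI, inner frame)."""
    if len(payload) < 8:
        raise ValueError("payload shorter than a VXLAN header")
    word0, word1 = struct.unpack("!II", payload[:8])
    if not (word0 >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return word1 >> 8, payload[8:]
```

A real VTEP would then place this payload in a UDP datagram addressed to the remote VTEP; the point of the sketch is only that the "translation boundary" boils down to a table lookup plus a small fixed header.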

We’ve been talking about this kind of device for some time, just not with this name. Think about what a device like this would be with a pure OpenFlow/OVSDB implementation (and it sounds like most announced VTEPs will also support OpenFlow/OVSDB). Same thing, just without the VXLAN tag. We plug physical devices in, but rather than rely on the flood-and-learn behavior of Ethernet or the standard forwarding behavior of routing protocols, we can define our own forwarding through a centralized controller. Same car, different engine.
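As a rough illustration of "defining our own forwarding", the sketch below models a switch as a priority-ordered table of match/action rules that a controller installs ahead of time. This is a toy model in the spirit of OpenFlow, not the OpenFlow protocol or any vendor's API: the `FlowRule` and `FlowTable` names, the packet fields, and the action strings are all assumptions made up for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class FlowRule:
    priority: int             # higher priority wins when several rules match
    match: Dict[str, object]  # header fields that must all be equal
    action: str               # hypothetical action label, e.g. "output:1"


@dataclass
class FlowTable:
    rules: List[FlowRule] = field(default_factory=list)

    def install(self, rule: FlowRule) -> None:
        """Called by the controller: add a rule, keep the table sorted."""
        self.rules.append(rule)
        self.rules.sort(key=lambda r: r.priority, reverse=True)

    def lookup(self, packet: Dict[str, object]) -> Optional[str]:
        """Return the action of the highest-priority matching rule."""
        for rule in self.rules:
            if all(packet.get(k) == v for k, v in rule.match.items()):
                return rule.action
        # Table miss: nothing is flooded; a real switch would typically
        # drop the packet or punt it to the controller.
        return None
```

The design choice worth noticing is the table miss: unlike a learning switch, an unknown destination is not flooded, because the forwarding state comes from the controller rather than from observing traffic.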

These are a few of the tweets I picked up in the last week or so - they can help give some ideas for how hardware VTEPs are used and how they work.

@santinorizzo @networkingnerd @mierdin NSX has gateway VMs as soft VTEP or you have HW VTEP. Both support OpenFlow & OVSDB.

— EtherealMind (@etherealmind) August 30, 2013

@etherealmind @santinorizzo @Mierdin Okay, so the plugins for phi VTEP participate in NSX. They offer visibility. Got it.

— Tom Hollingsworth (@networkingnerd) August 30, 2013

@Mierdin @etherealmind Non NSX hosts can decap the vWire. Think MPLS PHP. Maybe NSX host agents down the road?

— Tom Hollingsworth (@networkingnerd) August 30, 2013

The Role of the Software

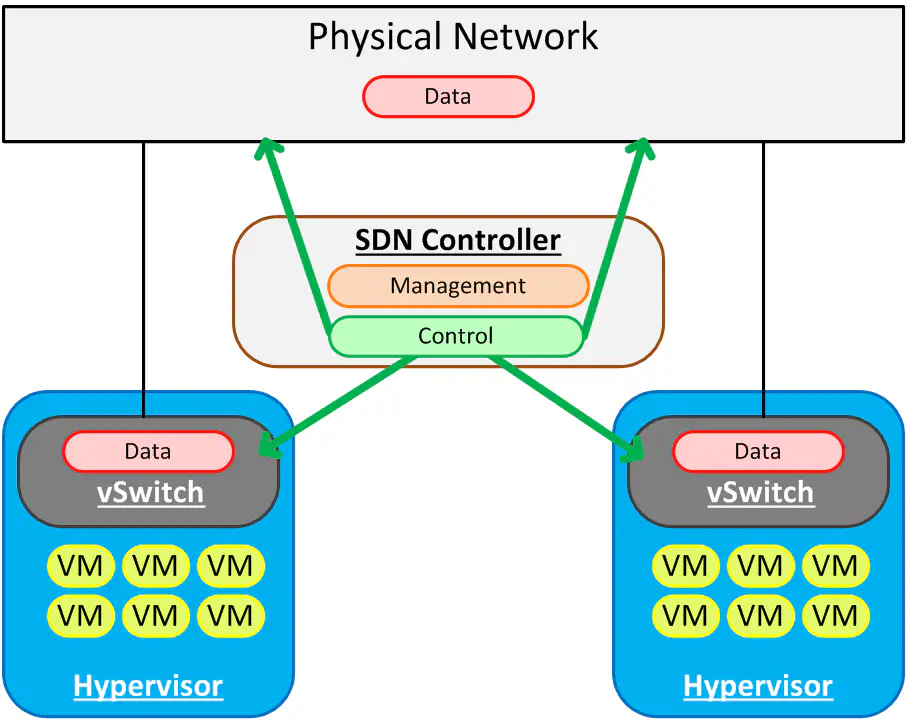

This is pretty simple. The software on top is what makes this all work. While technologies like OpenFlow, OVSDB, and VXLAN are cool to talk about, they’re just building blocks. Those same building blocks that are being used to do great things in one product can be used to do not-so-great things in another. SDN is about centralizing control so that we don’t have to spend time handling simple MAC (move/add/change) requests box by box when we could be handling them from a central, intelligent point. I used the graphic above in a previous post and it applies pretty well here. SDN started in the virtual realm because it was easy, but should absolutely encompass the physical world, as we saw in the previous section about VTEPs.

The controller needs to be aware of all of the overlay networks so that it can coordinate between hypervisors, and keep things straight.
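One way to picture that coordination: the controller keeps a table, per overlay network, of which VTEP each MAC address currently lives behind, and hands that information to the hypervisors. The sketch below is a deliberately simplified assumption of how such a table could look. The `OverlayController` class and its methods are hypothetical and do not describe how NSX or any other controller is actually implemented.

```python
from collections import defaultdict
from typing import Dict, List, Optional


class OverlayController:
    """Toy central view of overlay state: VNI -> {MAC -> VTEP IP}."""

    def __init__(self) -> None:
        self._locations: Dict[int, Dict[str, str]] = defaultdict(dict)

    def register(self, vni: int, mac: str, vtep_ip: str) -> None:
        """Record (or update) which VTEP a MAC sits behind in an overlay."""
        self._locations[vni][mac] = vtep_ip

    def lookup(self, vni: int, mac: str) -> Optional[str]:
        """Tell a hypervisor which VTEP to tunnel to for this MAC."""
        return self._locations.get(vni, {}).get(mac)

    def vteps_in(self, vni: int) -> List[str]:
        """List every VTEP that currently hosts a workload in this overlay."""
        return sorted(set(self._locations.get(vni, {}).values()))
```

Because the same MAC can be registered again with a new VTEP address, a workload moving between hosts becomes a single table update at the controller instead of something every switch has to relearn.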

This is also where we get to implement nifty network services like distributed routing in the vSwitch, load balancing, firewalling, and so on. That’s ultimately the job of the controller, since we’ve now centralized the control plane. The data plane now just does what it’s told.

As a data center network engineer, I’ve spent a lot of time thinking about the “underlay”, or the physical infrastructure on which these overlays run. I have split this off into a third and final part, Part 3.